By Catherine Webb and Nicola Gujer

In October, the Ministry of Business, Innovation and Employment (MBIE) published an interesting document: the National Statement of Science Investment 2015-2025 (NSSI). It is intended to assess New Zealand’s strengths and weaknesses in research and development and to inform science funding for the next decade. As scientists, we could find our futures shaped by this document, so it’s understandable that it has generated dialogue, especially about its use of data.

Among the NSSI’s many attractive graphics, one graph in particular (Figure 1), which compares the impact of New Zealand research with that of a number of similar countries, has generated active discussion. Several people have commented on social media about how poorly we seem to do in multidisciplinary science compared with Denmark, Israel, Finland, Ireland and Singapore. We wanted to look a little deeper into what is going on.

This data is taken from SciVal, Elsevier’s tool for analysing publications and citations, which draws on Elsevier’s Scopus database. The data is also field-weighted, meaning it is normalised so that different fields can be compared.

The “percentage of publications in the top 10% of citations by field” is used here as a measure of the excellence of a country’s academic output. The greater the proportion of Denmark’s maths papers that make it into the most cited 10% of maths papers worldwide, the more excellent Denmark is judged to be at maths.
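To make the measure concrete, here is a minimal Python sketch of how a “percentage of publications in the top 10% of citations” could be computed for a single field. It is our own simplification, not SciVal’s method: it assumes raw citation counts are available for every paper in the field worldwide, it skips field-weighting entirely, and it counts papers tied with the cut-off paper as being in the top 10%. The function name `top10_share` and all the numbers are hypothetical.

```python
import math


def top10_share(country_citations, world_citations):
    """Return the percentage of a country's papers in one field that reach
    the world's top 10% most-cited papers in that same field.

    Simplified sketch: raw citation counts, no field-weighting, and papers
    tied with the cut-off paper are counted as being in the top 10%.
    """
    ranked = sorted(world_citations, reverse=True)
    # Number of papers that make up the top 10% (at least one paper).
    top_n = max(1, math.ceil(len(ranked) / 10))
    # Citation count of the last paper inside the top 10%.
    threshold = ranked[top_n - 1]
    hits = sum(1 for c in country_citations if c >= threshold)
    return 100.0 * hits / len(country_citations)


# Hypothetical field with 20 papers worldwide, cited 1 to 20 times each;
# the top 10% is therefore the two papers with 19 and 20 citations.
world = list(range(1, 21))
# Four of those papers come from our hypothetical country.
country = [19, 5, 20, 3]
print(top10_share(country, world))  # 50.0
```

Two of the country’s four papers clear the cut-off, so by this measure half of its output in the field counts as “excellent”.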

This seems like a reasonable measure, and it’s easy to see that New Zealand sits concerningly far to the left of the scale. But does the graph really say, as the NSSI claims, that we need to up our game in almost every field to be as ‘excellent’ as the countries we are compared with?

We set out to pull apart this data, go back to the source, and check out how reliable this information is.

The first issue we encountered was the field labelled “Multidisciplinary”. As you can see, in all six countries this field outstrips the others where citation excellence is concerned. This made us wonder what “Multidisciplinary” actually means here: are these small countries all so good at collaborating across different fields?

As it turns out, “Multidisciplinary” publications seem to be labelled according to the nature of the journal they are published in, not because of any cross-field collaboration within the paper. For instance, this category includes papers published in the multidisciplinary journals Nature and Science, as well as more unusual choices such as Vaccines and the Journal of Chaos and Bifurcation Theory. Because a few multidisciplinary journals such as Nature and Science are extremely highly cited, the distribution of citation impact for “Multidisciplinary” publications is skewed to the right. Field-weighting, which is basically an arithmetic mean, does a poor job of normalising the top 10% of such a skewed distribution, so the field receives a confusingly high excellence score.
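The pull that a few very highly cited papers exert on an arithmetic mean is easy to see with made-up numbers. The sketch below uses Python’s standard `statistics` module on a hypothetical set of eleven papers; it only illustrates the skew described above and is not a reproduction of SciVal’s field-weighting calculation.

```python
import statistics

# Hypothetical citation counts for one "field": most papers get a handful
# of citations, while two papers (think of a Nature or Science article)
# collect hundreds.
citations = [2, 3, 3, 4, 5, 5, 6, 7, 8, 400, 650]

mean = statistics.mean(citations)
median = statistics.median(citations)
print(f"mean = {mean:.1f}, median = {median}")  # mean = 99.4, median = 5

# Share of papers whose citation count exceeds the field average.
above_mean = sum(c > mean for c in citations) / len(citations)
print(f"papers above the mean: {above_mean:.0%}")  # papers above the mean: 18%
```

In this invented example, nine of the eleven papers sit far below the mean, so an average anchored by two outliers says little about a typical paper in the category.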

The second issue is more significant. We wanted to know how stable and consistent these field rankings were across the years, so we went back to SciVal to check. We discovered two things. First, because citation counts keep changing, the data we retrieved this November on publications from 2013 already differed from the data on the very same 2013 publications gathered earlier this year for use in the NSSI.

Figure 2. Change in the 2013 output-quality ranking of New Zealand science fields between data retrieved earlier in 2015 (left) and in November 2015 (right). (SciVal November 2015, NSSI)

Within a matter of months, the ranking of many fields changed substantially. This made us question whether the ranking stabilises over a period of years. Discovery number 2: no, it doesn’t.

Figure 3. Change in academic output quality ranking of New Zealand science fields over five years. (SciVal November 2015)

This graph shows how the order of the fields in which New Zealand is most ‘excellent’ has changed unpredictably and has not stabilised over five years. We can infer that the graph on page 19 of the NSSI may be an accurate snapshot of the data at the time it was taken (except for that remarkable multidisciplinary field), but the data moves so quickly that this type of graph cannot reflect any meaningful trend in the order of fields.
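One way to put a number on how far a ranking of fields has shifted, which is our suggestion rather than something the NSSI or SciVal reports, is Spearman’s rank correlation, ρ = 1 − 6Σd²/(n(n² − 1)), where d is the change in a field’s rank and n is the number of fields. A value of 1 means the order is unchanged; values near 0 mean the two orders are essentially unrelated. This sketch assumes complete rankings with no ties, and the field labels and ranks are hypothetical.

```python
def spearman_rho(ranks_a, ranks_b):
    """Spearman rank correlation between two complete rankings of the same
    fields, each given as {field: rank}. Assumes no tied ranks."""
    if ranks_a.keys() != ranks_b.keys():
        raise ValueError("both rankings must cover the same fields")
    n = len(ranks_a)
    # Sum of squared rank differences across all fields.
    d_squared = sum((ranks_a[f] - ranks_b[f]) ** 2 for f in ranks_a)
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


# Hypothetical rankings of five fields from two data retrievals.
earlier = {"Field A": 1, "Field B": 2, "Field C": 3, "Field D": 4, "Field E": 5}
later = {"Field A": 4, "Field B": 1, "Field C": 5, "Field D": 2, "Field E": 3}

print(round(spearman_rho(earlier, earlier), 2))  # 1.0
print(round(spearman_rho(earlier, later), 2))    # -0.1
```

A correlation near zero between two snapshots, as in this invented case, would mean the earlier order tells you almost nothing about the later one.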

That said, the SciVal data does have some information to offer.

Figure 4. Percentage of small advanced economies’ academic output falling within the 10% most cited academic output worldwide, by field, averaged over five years. (SciVal November 2015)

This is a remake of the NSSI graph, using data averaged over five years (2010-2014). As we would expect from what we have seen so far, the order of fields differs from that in the graph MBIE published. However, like the NSSI graph, it shows New Zealand scoring notably lower, on average, than the other small advanced economies.
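Averaging smooths out the year-to-year churn. A minimal sketch of the idea, assuming a hypothetical table of each field’s yearly top-10% share for 2010-2014 (all figures invented, and `rank_by_five_year_average` is our own illustrative helper), shows how a single-year order and a five-year order can disagree.

```python
from statistics import mean

YEARS = range(2010, 2015)  # publication years 2010-2014 inclusive


def rank_by_five_year_average(shares):
    """shares maps field -> {year: % of output in the world top 10%}.

    Returns (fields ordered from highest to lowest five-year average,
    dict of the averages themselves).
    """
    averages = {
        field: mean(by_year[y] for y in YEARS)
        for field, by_year in shares.items()
    }
    order = sorted(averages, key=averages.get, reverse=True)
    return order, averages


# Invented yearly figures for three hypothetical fields.
shares = {
    "Field A": {2010: 12.0, 2011: 14.5, 2012: 11.0, 2013: 13.5, 2014: 14.0},
    "Field B": {2010: 15.0, 2011: 10.0, 2012: 12.0, 2013: 9.0, 2014: 11.0},
    "Field C": {2010: 10.0, 2011: 11.0, 2012: 13.0, 2013: 14.0, 2014: 12.0},
}

# Ranking from a single year (2013) versus the five-year average.
single_year = sorted(shares, key=lambda f: shares[f][2013], reverse=True)
order, averages = rank_by_five_year_average(shares)
print(single_year)  # ['Field C', 'Field A', 'Field B']
print(order)        # ['Field A', 'Field C', 'Field B']
```

In this invented example, Field C tops the 2013 snapshot but Field A leads once all five years are taken into account.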

So does the graph say what it claims to say? With a bit of tweaking, it could. It cannot tell us anything meaningful about which fields NZ is doing best or worst in, because that can change within months. However, when extended to cover more years than just 2013, it does show that NZ consistently produces a lower percentage of globally top-quality papers than comparable countries.

Whether a paper’s place in the world’s top 10% by citations is a good way to measure scientific excellence is a question for another day.

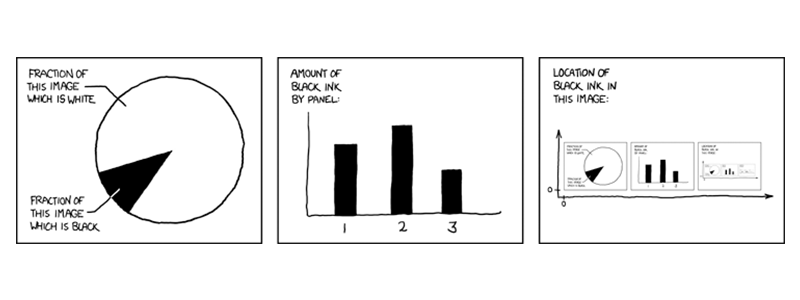

*Featured image by XKCD comics.*